What Would it Be Like to Do Functional Programming in Go?

By Noam YadgarDisclaimer: Go is not a pure functional programming language. This article does not try to teach you functional programming in Go since imposing functional programming concepts, may result in unnecessarily awkward code. Go has its style, and it’s proven to be highly successful. Therefore, there’s no point in forcing a foreign dialect on Go code. Haskell would be a great choice if you want to express yourself functionally.

This article aims to provide imperative programmers a bridge to the functional world. Crossing that bridge and seeing the other side can help us reimagine problem-solving and add new skills to our set. We will encounter Go translations to some original Haskell code, hoping this will allow us to understand the original dialect.

Speaking about translation, if you’ve asked me to compare programming languages to spoken languages, I’d say that Go is a dialect of C. Haskell, however, is not a dialect of C. It’s much easier to translate one dialect to another when the two dialects are of the same language; it’s more difficult when they’re not. Translation can either lose information or bend the cultural perception of the original content. I will do my best to provide a balance between the two.

Defining the Pair type

A prevalent thing about programming languages is the use of types. In both Haskell and Go, we can define data types. Let’s define a Pair type, a datatype with a pair of values of some other generic type.

Haskell:

data Pair a = Pair a a

Go:

const ()

X = iota

Y

) // using X and Y as indices for clarity later on

type Pair[a any] [2]a

func NewPair[a any](v1, v2 a) Pair[a] {

return Pair[a]{v1, v2}

}

You might be surprised, but the two examples above are similar in practical terms. The Go code provides more technical specifications because I’m specifying that the two values are also paired together in memory. Notice that in Go, we provide a “constructor” function that returns a Pair. In Haskell, we specify that we can “construct” a Pair of genric type a, by simply referring to Pair a a (we’ll see how this is done later).

Making it a Functor

If your datatype seems to act as a “container” of another generic type, it’s an excellent candidate to be a functor. In this article, we won’t go deep into the definition of a functor, but we’ll simply implement the behavior of a functor on a set of Pair.

In Category Theory, this will be called an endofunctor, a functor that maps a category (in our case, the category of types) to itself.

Haskell:

instance Functor Pair where

fmap f (Pair x y) = Pair (f x) (f y)

-- fmap can also be called with its "infix" operator: <$>

-- fmap f (Pair x y) = f <$> Pair x y

Go:

func Fmap[a, b any](f func(a) b, p Pair[a]) Pair[b] {

return NewPair(f(p[X]), f(p[Y]))

}

The two examples are similar in practical terms. The practical meaning of a functor, is that we can take any function of type (a -> b), apply it to a Pair of a, and get a Pair of b. For example:

isEven := func(x int) bool { return x%2 == 0 }

Fmap(isEven, NewPair(1, 2)) // {false, true}

Or in Haskell:

even <$> Pair 1 2 -- Pair False True

As we will see next, in Haskell, there’s a concept of proving to the compiler that a datatype is a curtain instance of some class, by gradually proving (implementing) its subclasses. So we just proved that a Pair is a Functor.

Making it an Applicative

If our Pair is a Functor, it might also be an Applicative. Applicative is a very efficient class in Haskell; it takes the idea of a Functor one step further. Instead of providing a function of type (a -> b), we will now provide a Pair of functions of type (a -> b). Essentially, chaining pairs of values without ever leaving the functorial context of a Pair. It also provides a way to “lift” a value into a functorial context. In our case, we will lift a value into a Pair context, by using the pure function.

Applicative is very close to be a Monad, which we will not cover in this article.

Haskell:

instance Applicative Pair where

pure x = Pair x x

Pair f g <*> Pair x y = Pair (f x) (g y)

Go:

func Pure[a any](x a) Pair[a] {

return NewPair(x, x)

}

func Apply[a, b any](p Pair[func(a) b], q Pair[a]) Pair[b] {

f := p[X]

g := p[Y] // declaring f and g for clarity

return NewPair(f(q[X]), g(q[Y]))

}

Making (Pair Num) a Num

Here, we observe the true power of functional programming, particularly Haskell expressions. Defining a Pair as an instance of an Applicative is paying off, as we define a Pair of Num as a Num. In the following definition, we’re no longer talking about a Pair of generic type, but specifically, a Pair of any type that satisfies the Num class (any number). Our goal is to prove that a Pair Num is itself a Num.

Haskell:

instance Num a => Num (Pair a) where

p + q = (+) <$> p <*> q

p * q = (*) <$> p <*> q

negate p = negate <$> p

abs p = abs <$> p

signum p = signum <$> p

fromInteger n = pure $ fromInteger n

Now for the Go translation (take your time to digest):

type Num interface {

int | int8 | int16 | int32 | int64 | uint | uint8 | uint16 | uint32 | uint64 | float32 | float64

}

func Add[a Num](p, q Pair[a]) Pair[a] {

opPlus := func(x a) func(a) a {

return func(y a) a {

return x + y

}

}

return Apply(Fmap(opPlus, p), q)

}

func Mul[a Num](p, q Pair[a]) Pair[a] {

opMul := func(x a) func(a) a {

return func(y a) a {

return x * y

}

}

return Apply(Fmap(opMul, p), q)

}

// in haskell it's automatically defined at the class level (p + negate q)

func Sub[a Num](p, q Pair[a]) Pair[a] {

return Add(p, Negate(q))

}

func Negate[a Num](p Pair[a]) Pair[a] {

return Fmap(func(x a) a { return x * -1 }, p)

}

func Abs[a Num](p Pair[a]) Pair[a] {

return Fmap(math.Abs, p)

}

func Signum[a Num](p Pair[a]) Pair[a] {

opSig := func(x a) a {

if x > 0 { return 1 }

if x < 0 { return -1 }

return 0

}

return Fmap(opSig, p)

}

func FromInteger[a Num](n int) Pair[a] {

return Pure(a(n))

}

As you can see, these function compositions are a bit awkward when written in imperative languages like Go. Composition is critical to functional programming, and functional languages excel at expressing function composition. For the imperative programmer, the Haskell code seems to be using magical-syntactic sugar to achieve such a compact definition of the Num (Pair Num). However, this definition truly is a complete one.

Let’s focus on the first function: addition (I’ll leave you the rest as an exercise):

(+) <$> p <*> q

Intuitively and symbolically, I like to think of this expression as if we’re “stitching” two Pair Num together, and our needle is the (+) operator. Going beyond the symbolic meaning of this expression, we can break the expression into 2 parts and analyze them separately:

(+) <$> p -- or: fmap (+) p

We’re mapping a Pair Num to the (+) operator, which in Haskell, is just a regular function. (+) is a binary operator (a function that takes two arguments) and in Haskell, we can write it in an “infix” form like 1 + 1, or in a “prefix” form like (+) 1 1. But there’s more to it. In Haskell, all functions are unary in disguise (they take only one argument). Haskell utilizes an extremely important concept (from Lambda calculus) called currying, which allows us to call (+) with a single argument and return a function of (+) x that waits for the second argument to be complete.

Since:

f <$> Pair x y = Pair (f x) (f y)

Therefore:

(+) <$> Pair x1 y1 = Pair ((+) x1) ((+) y1) -- a Pair of two functions of type Num -> Num

Now, we can replace the first part of the expression so that:

Pair ((+) x1) ((+) y1) <*> q

The Applicative operator of Pair is:

Pair f g <*> Pair x y = Pair (f x) (g y)

Therefore, the entire expression is evaluated as:

Pair ((+) x1 x2) ((+) y1 y2)

-- => Pair (x1 + x2) (y1 + y2)

No magic here, just function composition. We can now interact with Pair Num as if it were a number itself, using simple arithmetic operations.

Pair 1 2 + Pair 3 4 * Pair 5 6 - Pair 7 8 -- Pair 9 18

Or in Go:

Sub(Add(NewPair(1, 2), Mul(NewPair(3, 4), NewPair(5, 6))), NewPair(7, 8)) // {9, 18}

Conclusion

As you can see, even though Go is able to express all of the functional ideas presented in this article, we had to compromise readability and structure to achieve this. This comparison, however, is not fair. It’s completely biased towards Haskell.

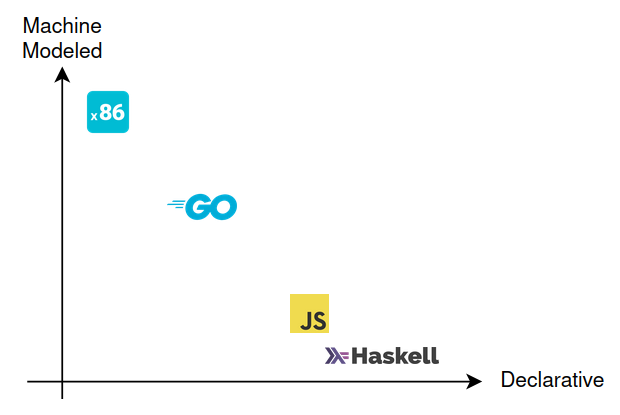

If this article aimed to show implementations of bit-manipulating algorithms, then Go would have been the better choice. Lower-level languages can directly talk about the machine’s infrastructure, while functional programming languages are generally focused on virtual structures. We can put this idea on a scale. One side is a language with a one-to-one model of the machine at the expense of readability and structure when modeling virtual constructs. The other side is a language that lets us easily reason and model virtual constructs at the expense of direct machine control.

Where do we go from here?

I should come clean. Even though I advocate for functional programming, I do most of my programming in Go. Go is proof that simplicity, being highly portable, having exceptional package management concept, built-in tools, and a good ecosystem are integral parts of what makes a programming language useful. When you need to get things done, Go will get you there quickly.

However, I also believe that the future of programming is marching towards declarative-functional languages. Like elevators, which have moved on from having a human operator, or manual gear cars, which have declined (not to mention the rise of self-driving cars), declarative-functional languages further abstract system-level programming, allowing us to focus more on the “what” and less on the “how”.

Of course, this movement toward abstraction is not new. Javascript programmers trust the underlying C++ engine to make optimized decisions, and engineers working on a Javascript engine also trust the C++ compiler to make optimized decisions about the Assembly code. The only difference here is that functional programming has more formalized, restrictive rules about declaratively, as it has its roots in Lambda Calculus, a Turing-complete system based on pure statements and no state. Lambda Calculus is not as physically feasible compared to a Turing Machine (i.e Von Neumann architecture), but if built on top of it, a robust system of logic and computation.